NEEMS Lecture: 5. Train the Next Action Classifier

In the previous section we talked about some machine learning theory. This section will cover the training of our decision tree model.

First, the prepared_narratives created earlier in this lecture are split into a train_set and a test_set. Then the train_set is split into features and labels: the features contain the previous and parent actions of each action, and the labels (the columns with the next_ prefix) record which action follows it.
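The split described above can be sketched with plain pandas. This is a minimal sketch under assumptions: split_features_labels is a hypothetical stand-in for the lecture's split_data_in_features_and_labels, the toy DataFrame and its column names are invented, and the 80/20 split via DataFrame.sample is one possible choice, not necessarily what the exercise does.

```python
import pandas as pd


def split_features_labels(df: pd.DataFrame):
    """Hypothetical stand-in for split_data_in_features_and_labels:
    columns prefixed with 'next_' are labels, all other columns are features."""
    label_cols = [c for c in df.columns if c.startswith("next_")]
    feature_cols = [c for c in df.columns if not c.startswith("next_")]
    return df[feature_cols], feature_cols, df[label_cols], label_cols


# Toy one-hot encoded narratives (invented for illustration only).
narratives = pd.DataFrame({
    "type_MovingToLocation":       [1, 0, 1, 0, 1],
    "previous_LookingForSomething": [0, 1, 0, 0, 1],
    "parent_MovingToOperate":      [0, 1, 1, 0, 0],
    "next_LookingForSomething":    [1, 0, 0, 0, 1],
    "next_ClosingADrawer":         [0, 1, 1, 0, 0],
    "next_NoAction":               [0, 0, 0, 1, 0],
})

# Hold out 20% of the rows as the test set; the seed makes the split reproducible.
train_set = narratives.sample(frac=0.8, random_state=0)
test_set = narratives.drop(train_set.index)

train_features, train_feature_cols, train_labels, train_label_cols = split_features_labels(train_set)
test_features, test_feature_cols, test_labels, test_label_cols = split_features_labels(test_set)
print(test_labels.head())
```

Because the same helper is applied to both sets, the test set ends up with exactly the same feature and label columns as the training set.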

Since all the functionality is already implemented, the test_set can be prepared the same way as the train_set.

```python
# TODO: Split the test set into features and labels. Their variables should have the prefix test_set.
test_set_features, test_set_features_cols, test_set_labels, test_set_labels_cols \
    = split_data_in_features_and_labels(test_set)
test_set_labels.head()
```

After preparing the train and test sets, we need to choose two parameters that determine the depth and breadth of the decision tree: max_depth and max_leaf_nodes. In the exercise they appear as the upper bounds of the two range constructions, 9 for max_depth and 21 for max_leaf_nodes. Parameters that train the model to an F1 score above 0.9 can be found programmatically by increasing both bounds, since a deeper tree with more leaf nodes can separate the training data more finely. Raising the bounds to 14 for max_depth and 26 for max_leaf_nodes is the lowest setting that reaches a score just above 0.9. Because range excludes its upper bound, the search then tries depths up to 13 and up to 25 leaf nodes.

```python
parameters = {'max_depth': range(1, 14, 1), 'max_leaf_nodes': range(2, 26, 1)}
```

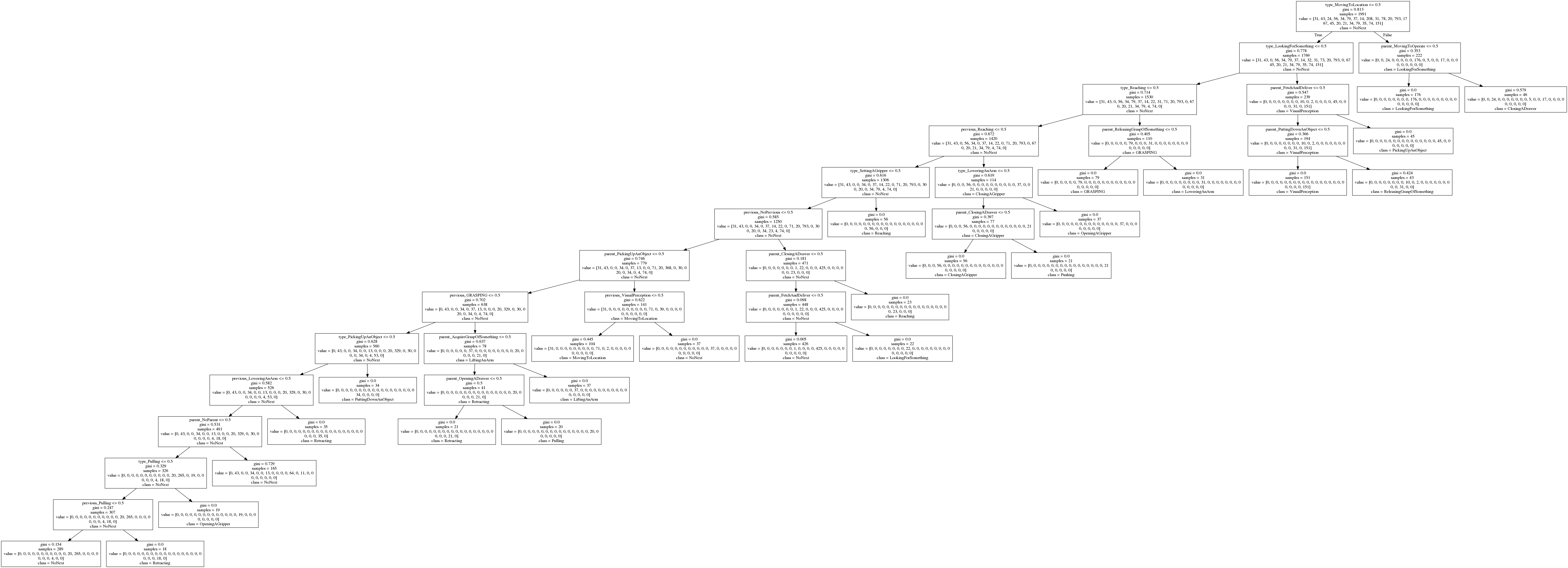
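A parameter grid like this is typically searched with scikit-learn's GridSearchCV. The sketch below makes several assumptions: that the exercise uses a grid search of this kind, that synthetic data from make_classification stands in for the narrative features and labels, and that macro-averaged F1 (scoring="f1_macro") with 3-fold cross-validation is the scoring setup. The printed scores therefore say nothing about the lecture's model.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in data; the lecture uses the prepared narrative features and labels.
X, y = make_classification(n_samples=200, n_features=8, n_informative=5,
                           n_classes=4, random_state=0)

# Same grid as in the lecture. range() excludes its upper bound,
# so depths 1..13 and 2..25 leaf nodes are tried.
parameters = {'max_depth': range(1, 14, 1), 'max_leaf_nodes': range(2, 26, 1)}

search = GridSearchCV(
    DecisionTreeClassifier(random_state=0),
    parameters,
    scoring="f1_macro",  # assumption: macro-averaged F1 over all next-action classes
    cv=3,                # assumption: 3-fold cross-validation
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

Every combination in the grid is fitted and scored, so the grid above costs 13 × 24 = 312 candidates per fold; widening the ranges grows this number multiplicatively.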

When the tree model is exported, a .dot file should be generated in the data directory of the folder this exercise was downloaded to. Executing the shell command below the export converts it into a PNG image.

```shell
# cd to the data directory, then execute:
dot -Tpng tree.dot -o tree.png
```
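For reference, a .dot file like tree.dot can be written with scikit-learn's export_graphviz. This sketch assumes the exercise exports a scikit-learn DecisionTreeClassifier; it uses the Iris dataset as stand-in data and a temporary directory in place of the data directory.

```python
import os
import tempfile

from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier, export_graphviz

# Stand-in model; the lecture exports the trained next-action classifier instead.
X, y = load_iris(return_X_y=True)
clf = DecisionTreeClassifier(max_depth=3, random_state=0).fit(X, y)

# Write the tree in Graphviz .dot format (temp dir instead of the data directory).
out_dir = tempfile.mkdtemp()
dot_path = os.path.join(out_dir, "tree.dot")
export_graphviz(
    clf,
    out_file=dot_path,
    feature_names=["sepal_length", "sepal_width", "petal_length", "petal_width"],
    class_names=["setosa", "versicolor", "virginica"],
    filled=True,   # colour each node by its majority class
    rounded=True,  # rounded node boxes
)
print(dot_path)  # then, in a shell: dot -Tpng tree.dot -o tree.png
```

Each node label in the exported file shows the split condition, the Gini score, the number of samples, the value list, and the majority class, which is exactly what the PNG displays.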

Open the PNG file to see the decision tree of the model we just trained. With the parameters used above, the generated decision tree looks like this.

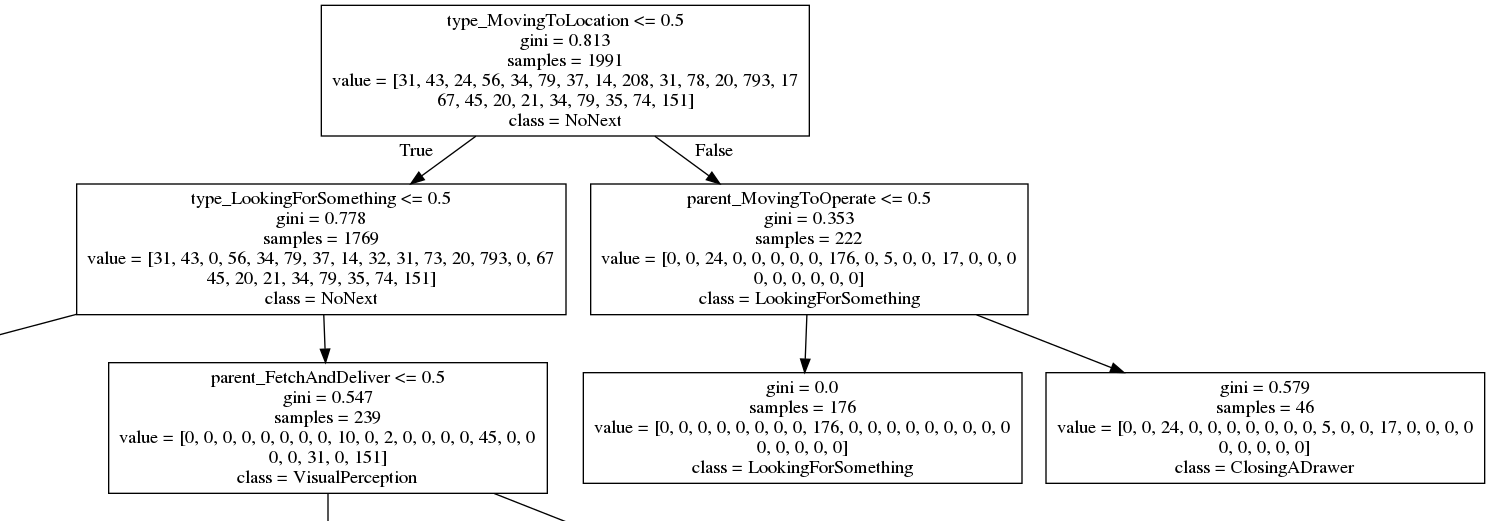

Zooming in on the root node, we can see the first decisions. The root holds the split with the highest information gain, i.e. the one that reduces the Gini impurity the most. First the type of the current action is checked, then the higher-order parent action. Together, these two pieces of information lead to a predicted next action in one of the leaf nodes.

The most likely next action at a node is the class with the highest entry in that node's value list. At the root this is NoAction, the best prediction available before any decision has been made. The Gini score (Gini impurity) shows how uncertain the prediction at a node is: 0.0 means all samples in the node belong to a single class, and higher values mean the samples are spread over more classes. We begin with a fairly high score of 0.813 at the root.
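The quantities read off a node can be computed by hand. The helpers below (gini, predicted_class and impurity_decrease are hypothetical names) implement the standard Gini impurity, 1 minus the sum of the squared class proportions, the majority-class prediction from a value list, and the weighted impurity decrease used to rank candidate splits. The class names and counts are invented; the functions accept either counts or proportions.

```python
def gini(counts):
    """Gini impurity of a node: 1 - sum(p_k ** 2) over the class proportions p_k."""
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)


def predicted_class(class_names, counts):
    """A node predicts the class with the highest entry in its value list."""
    best = max(range(len(counts)), key=lambda k: counts[k])
    return class_names[best]


def impurity_decrease(parent, left, right):
    """Weighted Gini decrease of a split; the tree chooses the split maximising this."""
    n = sum(parent)
    weighted_children = sum(left) / n * gini(left) + sum(right) / n * gini(right)
    return gini(parent) - weighted_children


classes = ["ClosingADrawer", "LookingForSomething", "NoAction"]  # hypothetical order
print(gini([0, 7, 0]))                                            # pure node -> 0.0
print(predicted_class(classes, [2, 1, 5]))                        # -> NoAction
print(round(impurity_decrease([4, 4, 4], [4, 4, 0], [0, 0, 4]), 3))  # -> 0.333
```

A split that sends one class cleanly to one side, as in the last line, lowers the impurity from 0.667 to a weighted 0.333; the root split is the candidate with the largest such decrease.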

1.) Say the current action, for which we want to predict the next one, is of type MovingToLocation (the feature value is higher than 0.5). From the root node we go down the right branch (false), since type_MovingToLocation <= 0.5 is false.

2a.) The next decision depends on whether the parent is MovingToOperate; let's say it is not (value below 0.5). Notice that the class entry of this node has changed to LookingForSomething, since this is now the most likely next action. Because parent_MovingToOperate <= 0.5 evaluates to true, the branching continues to the left and reaches a leaf node.

3a.) When the type of action is MovingToLocation and its parent is not MovingToOperate, the model predicts that the most likely next action is LookingForSomething, since it is the only non-zero entry in the value list. With a Gini score of 0.0, this prediction is unlikely to be wrong, based on the data we have.

2b.) In a different scenario, parent_MovingToOperate is above 0.5, so the condition evaluates to false and we reach a different leaf node on the right.

3b.) Even though the value list has multiple positive entries, the highest one belongs to ClosingADrawer, which is therefore the prediction when the type is MovingToLocation and the parent is MovingToOperate. With a Gini score of 0.579 the prediction is not very certain, since at least one other entry in the value list is high as well, representing the OpeningADrawer action. Given these features, however, it is still our best prediction.
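The walkthrough in steps 1 to 3b can be reproduced programmatically by following a sample down a fitted scikit-learn tree with decision_path. The toy data below is invented so that the resulting tree has the same shape (type_MovingToLocation at the root, parent_MovingToOperate below it); it is not the lecture's data, so the Gini values differ, and the trace helper is hypothetical.

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier

feature_names = ["type_MovingToLocation", "parent_MovingToOperate"]
# Invented toy data: one-hot features and the next action that followed.
X = np.array([[0, 0], [0, 1], [1, 0], [1, 0], [1, 1], [1, 1], [1, 1]])
y = np.array(["NoAction", "NoAction", "LookingForSomething", "LookingForSomething",
              "ClosingADrawer", "ClosingADrawer", "OpeningADrawer"])
clf = DecisionTreeClassifier(max_depth=2, random_state=0).fit(X, y)


def trace(clf, sample):
    """Print each decision on the sample's path and return the leaf's predicted class."""
    tree = clf.tree_
    # Node ids on the path; children always get higher ids, so this is root-first.
    path = clf.decision_path(sample.reshape(1, -1)).indices
    for node in path:
        if tree.children_left[node] == -1:  # leaf: take the largest value-list entry
            leaf_class = clf.classes_[np.argmax(tree.value[node][0])]
            print(f"leaf {node}: gini={tree.impurity[node]:.3f} -> {leaf_class}")
            return leaf_class
        feature = tree.feature[node]
        threshold = tree.threshold[node]
        went_left = sample[feature] <= threshold  # true -> left branch
        print(f"node {node}: {feature_names[feature]} <= {threshold:.1f} is {went_left}")


trace(clf, np.array([1, 0]))  # scenario 2a/3a -> LookingForSomething
trace(clf, np.array([1, 1]))  # scenario 2b/3b -> ClosingADrawer (impure leaf)
```

In the second call the leaf still holds an OpeningADrawer sample, so its Gini score is above 0 while the majority class wins, just as in step 3b.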

Finding the right parameters is one of the hardest parts of training a model. A Gini score of 0.0 in every single leaf might indicate that the model is heavily overfit, i.e. it has memorized the training data rather than learned patterns that generalize. On the other hand, a model that is too coarse makes overly general predictions: it has low variance but high bias. By partitioning the feature space just finely enough, the predictions will be certain enough for your purposes without overfitting.
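One way to check for the overfitting described above is to compare training and test F1 for trees of different depth and to test whether every leaf is pure. This is a sketch assuming synthetic data with 10% label noise (flip_y=0.1) and macro-averaged F1; the depths 2, 5 and None are arbitrary choices.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import f1_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic data with 10% flipped labels, so a perfect training fit means memorising noise.
X, y = make_classification(n_samples=600, n_features=10, n_informative=5,
                           n_classes=3, flip_y=0.1, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=0)

results = {}
for depth in [2, 5, None]:  # None = grow until every leaf is pure
    clf = DecisionTreeClassifier(max_depth=depth, random_state=0).fit(X_train, y_train)
    leaves = clf.tree_.children_left == -1
    results[depth] = (
        f1_score(y_train, clf.predict(X_train), average="macro"),
        f1_score(y_test, clf.predict(X_test), average="macro"),
        bool((clf.tree_.impurity[leaves] == 0).all()),  # Gini 0.0 in every leaf?
    )
    print(depth, "train F1 %.3f  test F1 %.3f  all leaves pure: %s" % results[depth])
```

The fully grown tree reaches a training F1 of 1.0 with only pure leaves here; if its test F1 is clearly lower than its training F1, it has most likely memorized the noise rather than learned the underlying pattern.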

If you are interested in specific entries of the value list, the next chapter illustrates them in a confusion matrix. Its labels are in the same order as the value list, so you can investigate which other classes could have been predicted besides the one with the highest value.
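In scikit-learn, the entries of each node's value list follow the order of clf.classes_, which holds the sorted class labels. This sketch assumes the confusion matrix is built with sklearn.metrics.confusion_matrix: passing labels=clf.classes_ keeps its rows and columns in that same order. The toy data is invented, and the matrix is computed on the training data only to keep the example short.

```python
import numpy as np
from sklearn.metrics import confusion_matrix
from sklearn.tree import DecisionTreeClassifier

# Invented toy data: one feature, four next-action classes.
X = np.array([[0], [0], [1], [1], [2], [2], [2]])
y = np.array(["NoAction", "NoAction", "LookingForSomething", "LookingForSomething",
              "ClosingADrawer", "ClosingADrawer", "OpeningADrawer"])
clf = DecisionTreeClassifier(random_state=0).fit(X, y)

# Every node's value list is ordered like clf.classes_ (sorted labels).
print(list(clf.classes_))

# labels=clf.classes_ keeps the confusion-matrix rows (true) and columns (predicted)
# in the same order as the value list.
cm = confusion_matrix(y, clf.predict(X), labels=clf.classes_)
print(cm)
```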

In the last section we will evaluate the trained decision tree model.