Accessing Episodes recorded in Virtual Reality

This tutorial will teach you how to access the events and the data in the episodes that have been recorded previously in the Virtual Reality environment.

Abstract

In order to enable robots to perform everyday activities with ease, they need to know when to do what. And who could be a better teacher than the human who wants the robots to perform these tasks? But instead of having us humans explain to the robots what to do, it is easier to just show them in a Virtual Reality environment, since the VR world can easily be adapted to whichever tasks we want to teach.

In the following video we can see how a human performs everyday activities within the VR environment.

RobCoG from Andrei Haidu on Vimeo.

Before we can teach the robots, though, we have to understand what kind of data is being recorded and how we can access and inspect it, so that we can decide which parts of it to pass on to the robots. This tutorial aims to teach how to interact with episodic memories and inspect the data stored within them using OpenEase and Prolog queries.

Introduction

We can record everything the human does in a Virtual Reality environment fairly precisely. The position of the head of the human can be tracked by tracking the headset itself, while the position of the hands can be mapped to the position of the joysticks.

Every interaction between the human's hands and the virtual environment is recorded. We can replay these recordings (episodes) and inspect them, learning from them how a human does a certain everyday activity. Why do we do tasks in a specific order? (For instance, in a table setting scenario most people would put the plate down first and then get the cutlery.) How do we place objects? What orientation of objects do we tend to prefer?

All of these things are small subconscious decisions we are not necessarily aware of, since we are just used to doing things a certain way. How should a robot know them? This is where episodic memories come in. We can use the recorded data from Virtual Reality to teach robots to do everyday activities without having to hard-code every little detail into the program of the robot (at least that's the goal). Before we can get there, though, we need to understand how the data is stored, what we can learn from it, and what information can be obtained in the first place.

Tutorials

Set up the Tutorial Environment

Go to the website http://data.open-ease.org, preferably using Mozilla Firefox or Chrome (other browsers might work as well but have not been tested). You do not need to log in to the website to complete this tutorial. In the upper right corner, select Experiment Selection and, from the drop-down menu that appears, select VR Breakfast (EASE) Milestone.

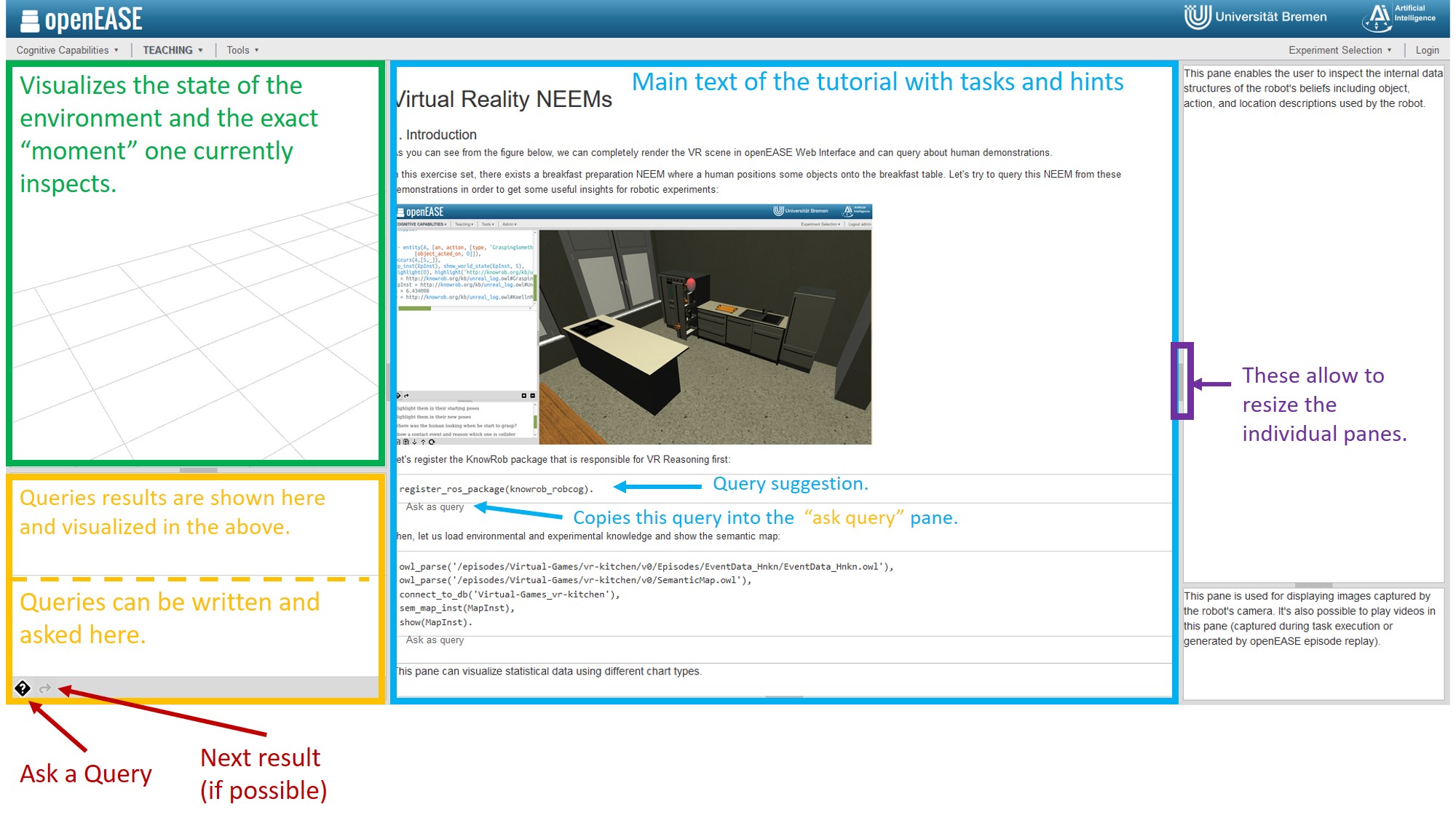

Click on Teaching in the menu bar, then Tutorials, and in the tutorials overview select Fall School VR Tutorials. Read and work through this overview tutorial, which should look like this:

The tutorial will explain a few basics, as well as describe the goals of the following tasks. If this is your first encounter with OpenEase, KnowRob, and Prolog and you want to get more familiar with them first, it is advisable to also read and work through the other tutorials available in the tutorial overview.

The following description comments on the built-in Virtual Reality NEEMs Tutorial within OpenEase and tries to explain in more detail what certain queries do and why they are used the way they are. You can of course try to complete the tutorial on your own without the information provided here. It's up to you.

In order to visualize what happened within an episode, we first need to load the environment in which that episode took place, as well as the episode in question. In short: we need to tell OpenEase what to load from the database and that we want the result to be visualized. To achieve this, select the Initialization query from the suggested queries in the bottom left corner. The entire query will then appear in the query editor so that we can inspect which individual queries are called and edit them if need be. In this case, we can just keep the query as-is and submit it with the little button labeled with a question mark.

Now the environment, in this case a kitchen, will be loaded in the visualization overview panel. This might take some time, possibly even several minutes, so don't worry if it is slow or if some objects just appear as black cubes. This is normal.

Now let's discuss a few of the queries we have used so far:

register_ros_package(knowrob_robcog).

This query loads the knowrob_robcog package, which contains some VR- and NEEM-specific queries.

owl_parse('/episodes/Virtual-Games/vr-kitchen/v0/Episodes/EventData_Hnkn/EventData_Hnkn.owl'), owl_parse('/episodes/Virtual-Games/vr-kitchen/v0/SemanticMap.owl'), connect_to_db('Virtual-Games_vr-kitchen'), sem_map_inst(MapInst), show(MapInst).

These are a few queries chained together by a comma (,), which acts as a logical "and" in Prolog. The full stop (.) at the end is also very important. It signals the end of the query, and you might get an error message if you forget to add it.

owl_parse loads the OWL files at the given paths, here the EventData file and the SemanticMap, so that OpenEase knows which episode to load. The EventData contains every event that happened in the episode: every action, such as picking or placing, is recorded as an Event in the EventData. The SemanticMap contains the initial state of the world, including which object is where and how the world is set up. For example, if you do experiments in a kitchen, it describes where all the kitchen furniture and objects are, where the meshes are located, and so on. With these queries, this information gets loaded into OpenEase.

connect_to_db connects to the MongoDB database which contains all the poses of the objects during the Events. The poses are mapped to timestamps.

sem_map_inst asks for the current instance of a SemanticMap.

show visualizes the SemanticMap in the visualization pane.

Note how, after executing these queries, you also get their results in the result pane above the question answering pane. In this case, we only had one variable, namely MapInst (everything starting with a capital letter is a variable in Prolog). This variable now contains the instance of the semantic map that we have loaded, and it is represented like this:

MapInst = http://knowrob.org/kb/u_map.owl#SemanticEnvironmentMap_iW6S

Whenever we use variables, there can be multiple solutions to one question. If you want to know whether this is the only solution or whether there are more, click on the little arrow button right next to the question mark button. There are further solutions as long as you are able to click the button and as long as you do not get a true or false statement in the result pane.

Tasks and Exercises

Now, let's see if you can figure out the following questions by yourself. There can be more than one way to write the queries that obtain the result, so if you come up with a different solution than the one suggested here, that's totally fine. You can try to solve these without any hints at all. In the following, the task questions are asked first. Then there is a section with hints and useful queries which might help to solve these tasks, and after that you will find the solutions.

Task Questions

Task 1: Which type of objects are brought by the demonstrators?

Task 2: What are these objects' initial poses?

Task 3: What are their final poses?

Task 4: Highlight the human when he starts to grasp an object

Useful Predicates / Queries / Cheat Sheet

Here are some useful queries that might help you solve the tasks. They are divided into two parts: the first contains general queries, the second contains more NEEM- and VR-specific ones. The only practical difference is that, to use the second group, you need to load the knowrob_robcog package first, which was the first step in the VR tutorial on the OpenEase website. In general, it is a good idea to try some predicates out to get a feeling for how they work and interact with each other, and to build your solution query step by step.

General Predicates

entity(Action, [an, action, ['task_context', Context]]).

Definition: returns Action whose task context is Context. Variables: Context → Bound; Action → Unbound

get_divided_subtasks_with_goal(Action, Context, SuccInstance, FailedInstances).

Definition: If an action, Action, has multiple subactions with the context Context, such that it retries this subaction until it succeeds, this predicate returns the successful instance and the failed instances. Variables: Action, Context → Bound; SuccInstance, FailedInstances → Unbound

task_start(Act, Start), task_end(Act, End).

Definition: returns the start and end time points of the given action, Act. Variables: Act → Bound; Start, End → Unbound

entity(Base, [an, object, [srdl2comp:urdf_name, 'base_link']]).

Definition: returns the base link individual. Variables: Base → Unbound

object_pose_at_time(Obj, TimePoint, mat(Pose)).

Definition: returns Obj’s pose at TimePoint. For non-moving objects, you can use 1 as TimePoint. Variables: Obj → Bound; TimePoint → Bound; Pose → Unbound

owl_individual_of(Obj, Type).

Definition: returns objects with the given type, or returns the types of the given object. Both Obj and Type can be either bound or unbound. Hint: a type that is important to consider is knowrob:'HingedJoint'

transform_between(Pose1, Pose2, [_, _, RelPosition, RelRot]).

Definition: returns Pose1’s position and rotation with respect to Pose2. Variables: Pose1, Pose2 → Bound; RelPosition, RelRot → Unbound

findall(Var, (PrologQuery), ListOfVar).

Definition: finds all solutions of PrologQuery and collects the value of the unbound Var from each solution in the list ListOfVar.

jpl_list_to_array(List, Array).

Definition: converts a Prolog list to a Java array.

append(List1, List2, NewList).

Definition: appends two lists together.

generate_feature_files(FeatureArrArr, Path).

Definition: given a float array of arrays, writes it as a feature file (with CSV extension) to the given path.

VR/NEEM specific Predicates

These are some queries which are part of the knowrob_robcog package or are otherwise NEEM/VR-specific. They might not always be defined if used with other data, but for this tutorial they can be very useful. You can rename the variables to whatever you like, but these names provide hints as to what they can be used for in the tasks.

ep_inst(EpInst).

Definition: returns the instance of an Episode.

u_occurs(EpInst, EventInst, Start, End).

Definition: Given the Episode Instance, returns any Event Instance that occurred, with the corresponding Start and End time stamps of the Event.

obj_type(EventInst, knowrob:'GraspingSomething').

Definition: Returns an Event Instance in which an Event of type GraspingSomething occurred. It can also be used to return an object of a specific type.

rdf_has(EventInst, knowrob:'objectActedOn', ObjInst).

Definition: Checks whether the RDF data contains an Object Instance that is linked to the given Event Instance through the property objectActedOn.

iri_xml_namespace(ObjInst, _, ObjShortName).

Definition: Cuts off the namespace prefix of the Object Instance, returning its short name.
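To make the effect of shortening a name more concrete, here is a minimal offline sketch in Python. It is not how iri_xml_namespace is implemented; it only assumes that the namespace ends at the last '#', which holds for the instance names shown in this tutorial. The helper name split_iri is hypothetical.

```python
from typing import Tuple


def split_iri(iri: str) -> Tuple[str, str]:
    """Split an IRI into (namespace, short name) at the last '#'.

    Assumption: the namespace ends with '#', as in the IRIs of this tutorial.
    """
    namespace, sep, short_name = iri.rpartition("#")
    if not sep:
        raise ValueError(f"no '#' found in IRI: {iri!r}")
    return namespace + sep, short_name


# Object instance IRI as it appears in the results of this tutorial.
obj_inst = "http://knowrob.org/kb/unreal_log.owl#KoellnMuesliCranberry_2pcO"
namespace, short_name = split_iri(obj_inst)
print(namespace)   # http://knowrob.org/kb/unreal_log.owl#
print(short_name)  # KoellnMuesliCranberry_2pcO
```

The short name is the part that actor_pose expects as its second argument.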

actor_pose(EpInst, ObjShortName, Start, PoseObjStart).

Definition: returns the Pose of the Object (ObjShortName) during a specific Timestamp (Start) from the given Episode Instance. The Pose will be an array of seven values. The first three are the x y z coordinates, while the last 4 are x y z w quaternion values.

Note: You need to use the iri_xml_namespace query to shorten the name. Otherwise this query might not work properly.
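If you copy such a pose out of the result pane, the following Python sketch shows one way to split the seven values into a position and a quaternion and to run a quick sanity check on it. The names Pose, split_pose and quaternion_norm are hypothetical helpers, and the sample values are taken from the Task 2 result further down in this tutorial. The check only uses the length of the quaternion, so it does not depend on the order of its components.

```python
import math
from typing import NamedTuple, Sequence, Tuple


class Pose(NamedTuple):
    position: Tuple[float, ...]    # first three values
    quaternion: Tuple[float, ...]  # last four values


def split_pose(values: Sequence[float]) -> Pose:
    """Split a seven-value pose into its position and quaternion parts."""
    if len(values) != 7:
        raise ValueError(f"expected 7 values, got {len(values)}")
    return Pose(tuple(values[:3]), tuple(values[3:]))


def quaternion_norm(quaternion: Sequence[float]) -> float:
    """Length of the quaternion; a valid rotation should be close to 1."""
    return math.sqrt(sum(component * component for component in quaternion))


# PoseObjStart as printed in the Task 2 result of this tutorial.
pose_obj_start = [
    -3.5357048511505127, -2.2400009632110596, 1.0195969343185425,
    0.8191515803337097, 0.000012126906767662149,
    -0.00002010483331105206, 0.5735770463943481,
]

pose = split_pose(pose_obj_start)
print(pose.position)
print(round(quaternion_norm(pose.quaternion), 3))  # 1.0
```

A norm that is far from 1 would suggest that the values were copied incorrectly or that the array is not a pose.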

show_world_state(EpInst, Start).

Definition: visualizes the world state of a specific Episode Instance during a given Time Stamp.

highlight(Object).

Definition: Highlights a specific Object Instance in red within the visualization pane.

Solution suggestions

The following contains some solution suggestions. For the first task, we show multiple solutions to illustrate how different the approaches can be. After that, only one solution per task is presented. If you get the same result using different predicates, or even some which are not mentioned above, that's totally fine.

The idea behind each solution is briefly explained before the solution itself is shown. It can also be used as a hint towards finding a solution on your own.

Task 1: Which type of objects are brought by the demonstrators?

Solution 1

Idea: Get an episode instance. Get an event instance of that episode together with its start and end time. Restrict the event instance to those of type GraspingSomething. Get the object instance that is linked to that event through the property objectActedOn. Return the type of the object. Use findall to find all the types of objects which have been manipulated by the demonstrator and return them in a list.

Note: Some of the solutions will contain screenshots which are supposed to show the visualization of the result and the state of the world. Unfortunately, at the time of the writing of this tutorial, there was a bug which highlights all the objects instead of just one, so that everything appears red. The screenshots will be updated once this is fixed. Still, the position changes of the interacting person and the objects can be seen.

findall(ObjType, (ep_inst(EpInst), u_occurs(EpInst, EventInst, Start, End), obj_type(EventInst, knowrob:'GraspingSomething'), rdf_has(EventInst, knowrob:'objectActedOn', ObjInst), obj_type(ObjInst, ObjType)), ListOfObjectTypes).

Result of the Query:

[...]
ListOfObjectTypes = [
0 = knowrob:KoellnMuesliCranberry
1 = knowrob:KoellnMuesliCranberry
2 = knowrob:BaerenMarkeFrischeAlpenmilch18
3 = knowrob:BaerenMarkeFrischeAlpenmilch18
4 = knowrob:BowlLarge
5 = knowrob:BowlLarge
6 = knowrob:SpoonSoup
7 = knowrob:SpoonSoup
]
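Every type appears twice in this list, because findall keeps one entry per solution of the inner query. If you only care about the distinct types, you can remove the duplicates afterwards. The following Python sketch does this offline on a copy of the result while keeping the original order; within Prolog itself, the standard sort/2 predicate would also remove duplicates. The variable names are only illustrative.

```python
# Copy of the ListOfObjectTypes result shown above.
list_of_object_types = [
    "knowrob:KoellnMuesliCranberry",
    "knowrob:KoellnMuesliCranberry",
    "knowrob:BaerenMarkeFrischeAlpenmilch18",
    "knowrob:BaerenMarkeFrischeAlpenmilch18",
    "knowrob:BowlLarge",
    "knowrob:BowlLarge",
    "knowrob:SpoonSoup",
    "knowrob:SpoonSoup",
]

# dict.fromkeys keeps only the first occurrence of each key and
# preserves insertion order, so the types stay in their original order.
unique_types = list(dict.fromkeys(list_of_object_types))

for object_type in unique_types:
    print(object_type)
```

This leaves four distinct object types: the cereal, the milk, the bowl and the spoon.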

Solution 2

findall(_T, (entity(_A, [an, action, [type, 'GraspingSomething'], ['object_acted_on', O]]), owl_individual_of(O, _T)), ListOfObjects).

The result of the query is a very long list with many objects… here is a snippet:

ListOfObjects = [
0 = owl:Thing
1 = owl:NamedIndividual
2 = knowrob:KoellnMuesliCranberry
3 = knowrob:BreakfastCereal
4 = knowrob:CerealFood
5 = knowrob:Granular
[...]

Task 2: What are these objects' initial poses?

Idea: Very similar to Task 1, just without the findall, since we only need one instance here. Starting from the solution of Task 1, shorten the name of the object, determine the pose of the object at the start of the GraspingSomething event, update the world state to show the world at the start of the event, and highlight the object.

Solution

ep_inst(EpInst), u_occurs(EpInst, EventInst, Start, End), obj_type(EventInst, knowrob:'GraspingSomething'), rdf_has(EventInst, knowrob:'objectActedOn', ObjInst), obj_type(ObjInst, ObjType), iri_xml_namespace(ObjInst, _, ObjShortName), actor_pose(EpInst, ObjShortName, Start, PoseObjStart), show_world_state(EpInst, Start), highlight(ObjInst).

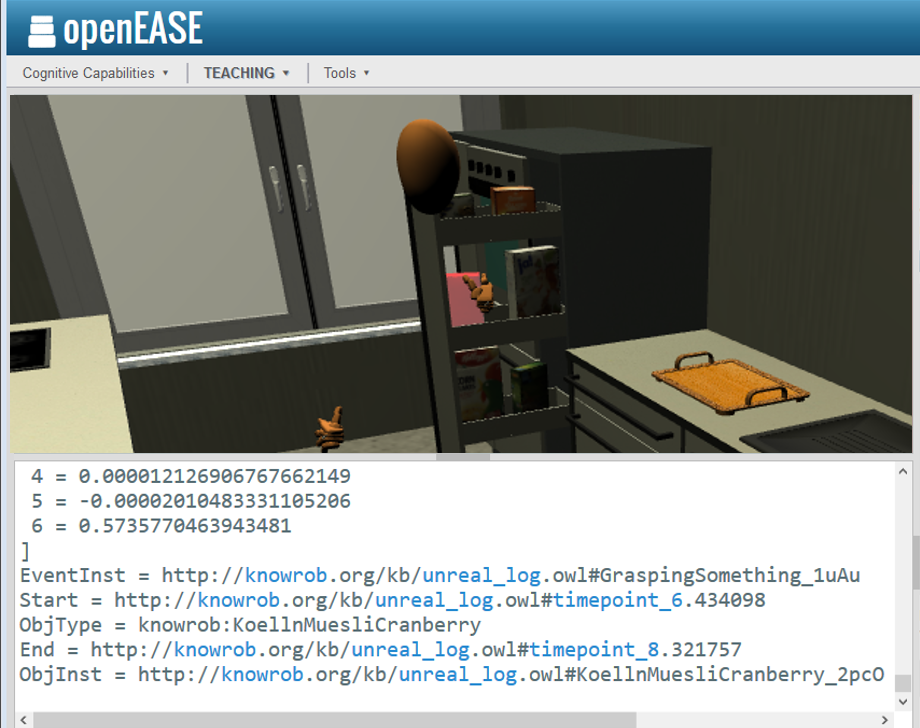

Result:

ObjShortName = KoellnMuesliCranberry_2pcO
EpInst = http://knowrob.org/kb/unreal_log.owl#UnrealExperiment_Hnkn
PoseObjStart = [
0 = -3.5357048511505127
1 = -2.2400009632110596
2 = 1.0195969343185425
3 = 0.8191515803337097
4 = 0.000012126906767662149
5 = -0.00002010483331105206
6 = 0.5735770463943481
]
EventInst = http://knowrob.org/kb/unreal_log.owl#GraspingSomething_1uAu
Start = http://knowrob.org/kb/unreal_log.owl#timepoint_6.434098
ObjType = knowrob:KoellnMuesliCranberry
End = http://knowrob.org/kb/unreal_log.owl#timepoint_8.321757
ObjInst = http://knowrob.org/kb/unreal_log.owl#KoellnMuesliCranberry_2pcO

Task 3: What are their final poses?

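Idea: Same as above, except that the timestamp changes from Start to End. Note that the query below still binds the pose to a variable named PoseObjStart, although it now holds the pose at End.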

Solution

ep_inst(EpInst), u_occurs(EpInst, EventInst, Start, End), obj_type(EventInst, knowrob:'GraspingSomething'), rdf_has(EventInst, knowrob:'objectActedOn', ObjInst), obj_type(ObjInst, ObjType), iri_xml_namespace(ObjInst, _, ObjShortName), actor_pose(EpInst, ObjShortName, End, PoseObjStart), show_world_state(EpInst, End), highlight(ObjInst).

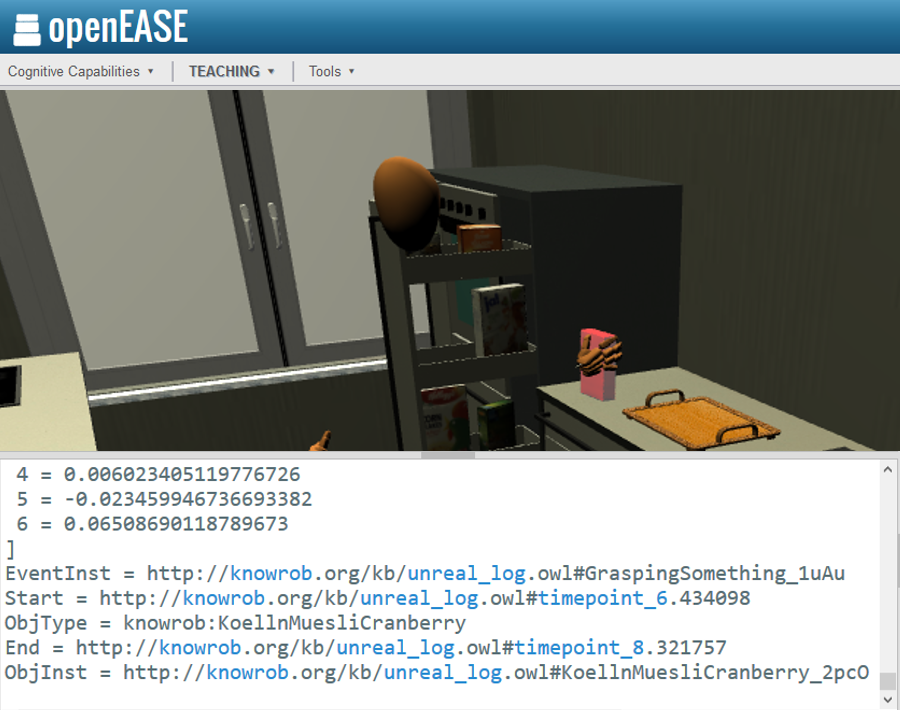

Result:

ObjShortName = KoellnMuesliCranberry_2pcO
EpInst = http://knowrob.org/kb/unreal_log.owl#UnrealExperiment_Hnkn
PoseObjStart = [
0 = -3.984745979309082
1 = -1.8089243173599243
2 = 0.9824687242507935
3 = 0.9975854754447937
4 = 0.006023405119776726
5 = -0.023459946736693382
6 = 0.06508690118789673
]
EventInst = http://knowrob.org/kb/unreal_log.owl#GraspingSomething_1uAu
Start = http://knowrob.org/kb/unreal_log.owl#timepoint_6.434098
ObjType = knowrob:KoellnMuesliCranberry
End = http://knowrob.org/kb/unreal_log.owl#timepoint_8.321757
ObjInst = http://knowrob.org/kb/unreal_log.owl#KoellnMuesliCranberry_2pcO
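With the poses at Start and End at hand, you can already compare them outside of OpenEase. The following Python sketch computes how long the GraspingSomething event lasted and how far the object moved in that time, using the values from the results of Task 2 and Task 3. It assumes that the number after timepoint_ is a time value and that both poses are given in the same coordinate frame; the units are not stated in this tutorial, so none are printed. The helper timepoint_value is hypothetical.

```python
import math


def timepoint_value(iri: str) -> float:
    """Extract the numeric suffix of an IRI like '...#timepoint_6.434098'."""
    _, sep, value = iri.rpartition("timepoint_")
    if not sep:
        raise ValueError(f"not a timepoint IRI: {iri!r}")
    return float(value)


# Start and End as printed in the results of Task 2 and Task 3.
start = "http://knowrob.org/kb/unreal_log.owl#timepoint_6.434098"
end = "http://knowrob.org/kb/unreal_log.owl#timepoint_8.321757"

# Object poses at Start (Task 2) and at End (Task 3).
pose_at_start = [
    -3.5357048511505127, -2.2400009632110596, 1.0195969343185425,
    0.8191515803337097, 0.000012126906767662149,
    -0.00002010483331105206, 0.5735770463943481,
]
pose_at_end = [
    -3.984745979309082, -1.8089243173599243, 0.9824687242507935,
    0.9975854754447937, 0.006023405119776726,
    -0.023459946736693382, 0.06508690118789673,
]

duration = timepoint_value(end) - timepoint_value(start)
# Only the first three values (the position) enter the distance.
displacement = math.dist(pose_at_start[:3], pose_at_end[:3])

print(f"event duration:  {duration:.3f}")      # 1.888
print(f"position change: {displacement:.3f}")  # 0.624
```

The last four values of the two poses differ as well, which indicates that the object was also rotated during the event.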

Task 4: Highlight the human when he starts to grasp an object

Idea: Same as above, except that we now want the pose of the person. The person who interacts with the objects is referred to as Camera, since this is where the camera would be. This is important for perception.

Solution

ep_inst(EpInst), u_occurs(EpInst, EventInst, Start, End), obj_type(EventInst, knowrob:'GraspingSomething'), rdf_has(EventInst, knowrob:'objectActedOn', ObjInst), obj_type(ObjInst, ObjType), iri_xml_namespace(ObjInst, _, ObjShortName), rdf_has(CameraInst, rdf:type, knowrob:'CharacterCamera'), iri_xml_namespace(CameraInst, _, CameraShortName), show_world_state(EpInst, Start), actor_pose(EpInst, CameraShortName, Start, CameraStartPose), highlight(CameraInst).

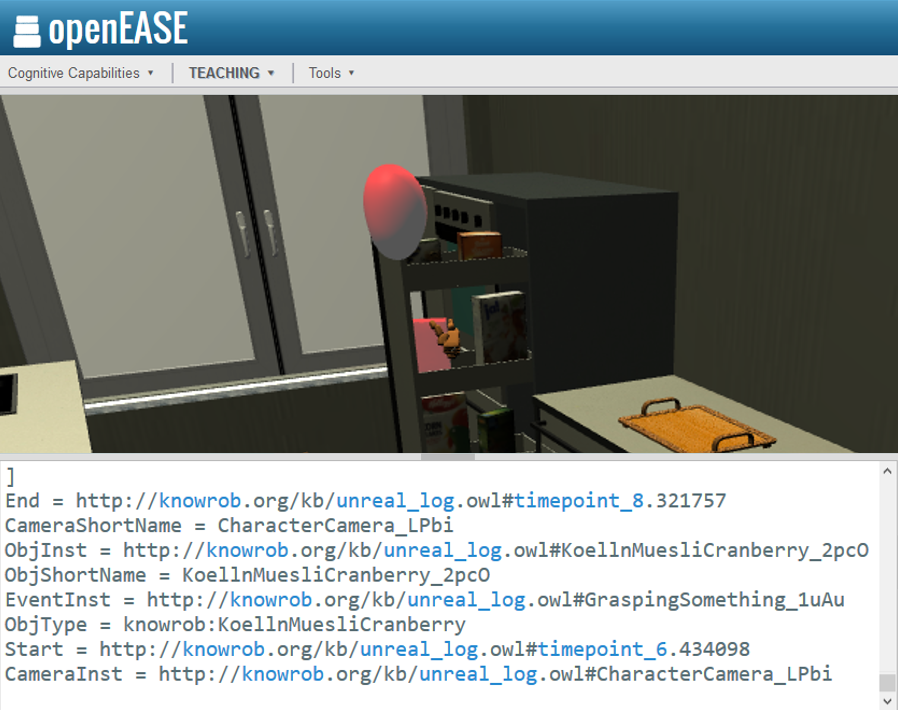

Result:

EpInst = http://knowrob.org/kb/unreal_log.owl#UnrealExperiment_Hnkn
CameraStartPose = [
0 = -3.3242106437683105
1 = -1.7523821592330933
2 = 1.5412843227386475
3 = -0.4662284851074219
4 = -0.24837808310985565
5 = -0.10210808366537094
6 = 0.8429195284843445
]
End = http://knowrob.org/kb/unreal_log.owl#timepoint_8.321757
CameraShortName = CharacterCamera_LPbi
ObjInst = http://knowrob.org/kb/unreal_log.owl#KoellnMuesliCranberry_2pcO
ObjShortName = KoellnMuesliCranberry_2pcO
EventInst = http://knowrob.org/kb/unreal_log.owl#GraspingSomething_1uAu
ObjType = knowrob:KoellnMuesliCranberry
Start = http://knowrob.org/kb/unreal_log.owl#timepoint_6.434098
CameraInst = http://knowrob.org/kb/unreal_log.owl#CharacterCamera_LPbi
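One thing you can learn from this result is how close the demonstrator's head was to the object when the grasp started. The short Python sketch below computes the distance between the camera position from this result and the object position from the Task 2 result. It assumes that both poses are expressed in the same coordinate frame and unit, which this tutorial does not state explicitly, and the variable names are only illustrative.

```python
import math

# Positions are the first three values of each pose array.
# CameraStartPose from the Task 4 result:
camera_position = (-3.3242106437683105, -1.7523821592330933, 1.5412843227386475)
# PoseObjStart from the Task 2 result (same Start timepoint):
object_position = (-3.5357048511505127, -2.2400009632110596, 1.0195969343185425)

# Straight-line distance between the camera and the object.
distance = math.dist(camera_position, object_position)
# Difference along the third coordinate (camera minus object).
third_axis_offset = camera_position[2] - object_position[2]

print(f"camera-to-object distance: {distance:.3f}")
print(f"offset along third axis:   {third_axis_offset:.3f}")
```

Repeating this for every GraspingSomething event would give a first impression of the distances at which the demonstrator typically starts a grasp.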

Best practices and Feedback

Best practices

- Build queries one by one, stacking them on one another. Once you know how some of them work, you can build on top of that.

- Ask questions if you have the chance to.

- Visualization can be very helpful (if it works).

Feedback

If you have any suggestions or feedback about this tutorial, or if you encounter bugs, feel free to contact us. We appreciate any given feedback :)