Accessing Episodes recorded in Virtual Reality

This tutorial will teach you how to access the events and the data in the episodes that have been recorded previously in the Virtual Reality environment.

Abstract

In order to enable robots to perform everyday activities with ease, they need to know when to do what. And who could be a better teacher than the human who wants the robots to perform these tasks? Instead of having us humans explain to the robots what to do, it is easier to just show them in a Virtual Reality environment, since the VR world can easily be adapted to whichever tasks we want to teach. Before we can teach the robots, though, we have to understand what kind of data is being recorded and how we can access and inspect it, so that we can decide which parts of it to pass on to the robots. This tutorial teaches you how to interact with episodic memories and inspect the data stored within them using OpenEase and Prolog queries.

Introduction

We can record everything a human does in a Virtual Reality environment fairly precisely. The position of the human's head is tracked via the headset itself, while the positions of the hands are mapped to the positions of the hand-held controllers (joysticks). Every interaction of the human's hands with the virtual environment is recorded. We can replay these recordings (episodes) and inspect them to learn how a human performs certain everyday activities. Why do we do certain tasks in a specific order? (E.g. in a table setting scenario, most people put the plate down first and then get the cutlery.) How do we place objects? Which object orientations do we tend to prefer? All of these are small subconscious decisions we are not necessarily aware of, since we are simply used to doing things a certain way. How should a robot know them? This is where episodic memories come in. We can use the recorded data from Virtual Reality to teach robots everyday activities without having to hard-code every small detail into the robot's program (at least, that is the goal). Before we get there, though, we need to understand how the data is stored, what information can be obtained from it in the first place, and what we can learn from it.

Tutorials

Setup the Tutorial Environment

Go to the website http://data.open-ease.org, preferably using Mozilla Firefox or Chrome (other browsers may work as well but have not been tested). You do not need to log in to the website to complete this tutorial. In the upper right corner, select Experiment Selection, and from the drop-down menu that appears select VR Breakfast (EASE) Milestone.

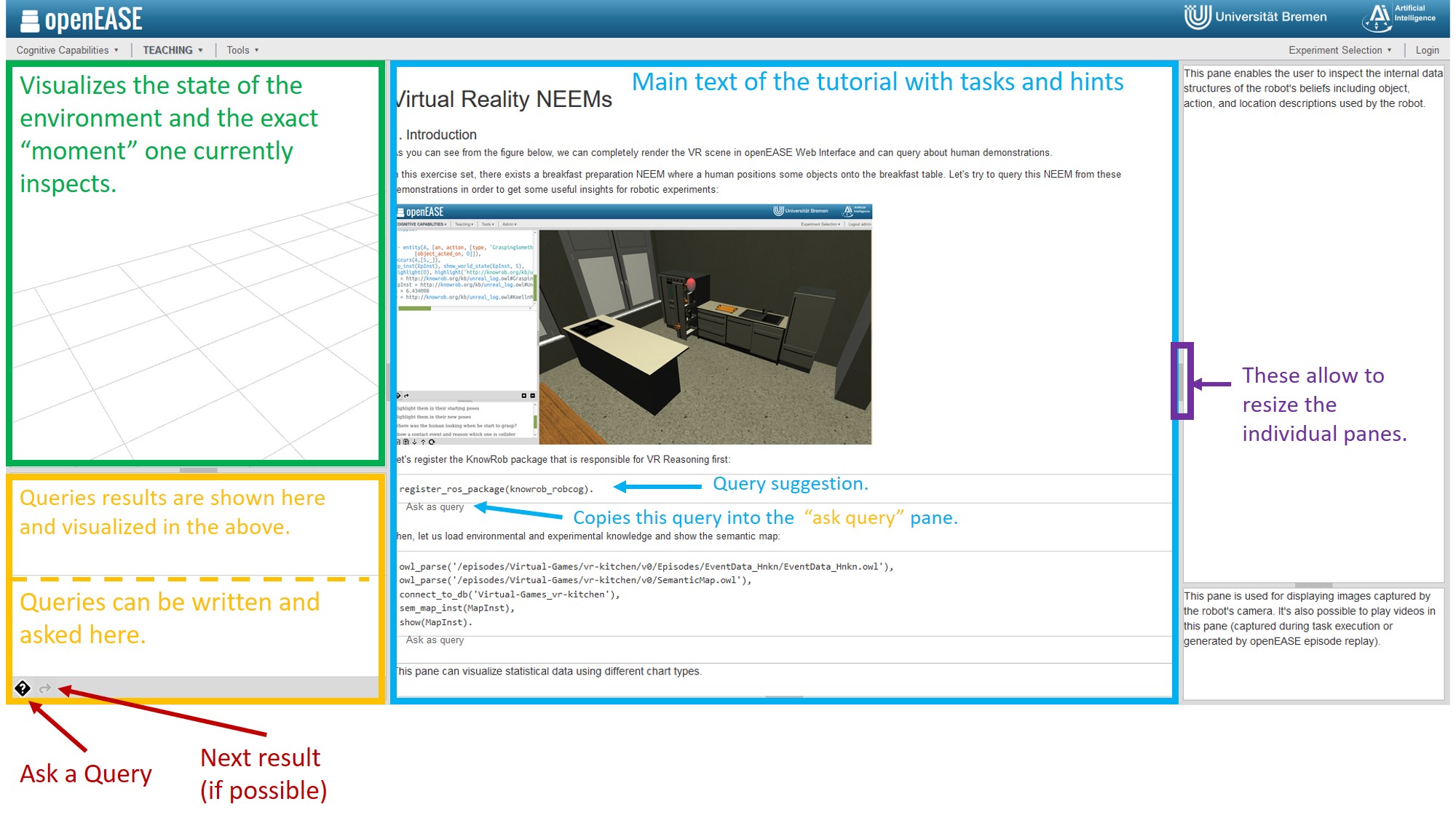

Click Teaching in the menu bar, then Tutorials, and in the tutorials overview select Fall School VR Tutorials. Read and work through this overview tutorial.

The tutorial explains a few basics and describes the goals of the following tasks. If this is your first encounter with OpenEase, KnowRob and Prolog and you want to get more familiar with them first, it is advisable to also read and do the other tutorials available in the tutorials overview.

The following description comments on the built-in Virtual Reality NEEMs tutorial within OpenEase and tries to explain in more detail what certain queries do and why they are used the way they are. You can of course try to complete the tutorial on your own without the information provided here. It's up to you.

In order to visualize what happened within an episode, we first need to load the environment in which that episode took place, as well as the episode itself. In short: we need to tell OpenEase what to load from the database and that we want the result to be visualized. To do so, select the Initialization query from the suggested queries in the bottom left corner. The entire query then appears in the query editor, so that we can inspect which individual queries are called and edit them if need be. In this case we can keep the query as-is and submit it with the little button labeled with a question mark.

Now the environment, in this case a kitchen, is loaded in the visualization overview panel. Loading can take a while, up to several minutes, so don't worry if it is slow or if some objects appear as black cubes. This is normal.

Now let's discuss a few of the queries we have used so far:

register_ros_package(knowrob_robcog).

This query loads the knowrob_robcog package, which contains VR- and NEEM-specific queries.

owl_parse('/episodes/Virtual-Games/vr-kitchen/v0/Episodes/EventData_Hnkn/EventData_Hnkn.owl'), owl_parse('/episodes/Virtual-Games/vr-kitchen/v0/SemanticMap.owl'), connect_to_db('Virtual-Games_vr-kitchen'), sem_map_inst(MapInst), show(MapInst).

These are several queries chained together by commas; in Prolog, a comma acts as a logical and. The full stop at the end is also very important: it signals the end of the query, and you may get an error message if you forget it.
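The following self-contained SWI-Prolog sketch illustrates how a conjunction passes a variable from one goal to the next, just as MapInst is bound by sem_map_inst and then handed to show. The facts map_instance/1 and object_in_map/2 and the predicate map_object/1 are hypothetical stand-ins invented for this example; they are not KnowRob predicates.

```prolog
% Hypothetical stand-in facts (not KnowRob predicates). In OpenEase this
% information comes from the parsed SemanticMap.
map_instance('SemanticEnvironmentMap_iW6S').

object_in_map('SemanticEnvironmentMap_iW6S', 'KitchenTable_1').
object_in_map('SemanticEnvironmentMap_iW6S', 'Fridge_1').

% The comma is a conjunction: the first goal binds MapInst and the second
% goal is then called with that binding. The clause ends with a full stop.
map_object(Obj) :-
    map_instance(MapInst),
    object_in_map(MapInst, Obj).
```

Loaded into a plain SWI-Prolog session, the query map_object(O). first answers O = 'KitchenTable_1' and, when asked for more, O = 'Fridge_1'.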

owl_parse parses the OWL files at the given paths, here the episode's EventData file and the SemanticMap, so that OpenEase knows which episode to load. The EventData contains every event that transpired in the episode: every action, such as picking and placing, is recorded as an Event. The SemanticMap contains the initial state of the world, i.e. where each object is and how the world is set up. For example, if you run experiments in a kitchen, it describes where all the kitchen furniture and objects are, where their meshes are located, etc. With these queries, this information is loaded into OpenEase.
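To make the split between the two files concrete, here is a toy model in plain SWI-Prolog: events with a type, the object acted on and a start and end time (the role of the EventData), plus the initial placement of objects (the role of the SemanticMap). The predicates event/5, initially_on/2, active_at/3 and grasped_from/2 and all object names are hypothetical; the real data is stored as OWL and queried through KnowRob, not through these facts.

```prolog
% Hypothetical EventData: event(Id, Type, ObjectActedOn, Start, End), times in seconds.
event(ev1, 'GraspingSomething', 'Bowl_1', 2.0, 3.5).
event(ev2, 'GraspingSomething', 'Spoon_1', 6.0, 7.0).
event(ev3, 'Contact', 'Bowl_1', 3.5, 9.0).

% Hypothetical SemanticMap: where each object is at the start of the episode.
initially_on('Bowl_1', 'Counter_1').
initially_on('Spoon_1', 'Drawer_1').

% Events running at time T (start inclusive, end exclusive).
active_at(T, Id, Type) :-
    event(Id, Type, _Obj, Start, End),
    Start =< T,
    T < End.

% Combine both sources: where a grasped object was initially located.
grasped_from(Obj, Place) :-
    event(_Id, 'GraspingSomething', Obj, _Start, _End),
    initially_on(Obj, Place).
```

Keeping the events and the initial world state apart, as the two files do, means the same map can be reused for many episodes while each episode only adds its own events.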

connect_to_db connects to the MongoDB database that contains the poses of the objects during the events. Each pose is stored together with a timestamp.
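Because poses are stored with timestamps, "where was an object at time T" amounts to picking the latest pose recorded at or before T, and an object's initial and final poses are simply its earliest and latest samples, which is the idea behind Tasks 2 and 3. The sketch below illustrates this in plain SWI-Prolog with a hypothetical pose/3 table and hypothetical helper predicates; it does not reproduce the MongoDB schema or the OpenEase queries for accessing it.

```prolog
:- use_module(library(lists)).   % last/2

% Hypothetical pose log: pose(Object, Timestamp, position(X, Y, Z)).
pose('Bowl_1', 0.0, position(1.0, 0.5, 0.9)).
pose('Bowl_1', 3.0, position(1.2, 0.6, 1.1)).
pose('Bowl_1', 8.0, position(0.4, 1.5, 0.8)).

% All samples of Obj as Timestamp-Pose pairs, sorted by timestamp.
samples(Obj, Sorted) :-
    findall(Ts-P, pose(Obj, Ts, P), Pairs),
    keysort(Pairs, Sorted).

% Pose at time T: the most recent sample recorded at or before T.
% Fails if nothing was recorded yet.
pose_at(Obj, T, Pose) :-
    findall(Ts-P, (pose(Obj, Ts, P), Ts =< T), Pairs),
    keysort(Pairs, Sorted),
    last(Sorted, _-Pose).

% Initial and final poses: the earliest and the latest sample.
initial_pose(Obj, Pose) :-
    samples(Obj, [_-Pose|_]).

final_pose(Obj, Pose) :-
    samples(Obj, Sorted),
    last(Sorted, _-Pose).
```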

sem_map_inst retrieves the instance of the loaded SemanticMap and binds it to its argument (here MapInst).

show visualizes the SemanticMap in the visualization pane.

Note that after executing these queries, their results also appear in the result pane above the question answering pane. In this case we only had one variable, namely MapInst (in Prolog, every name starting with a capital letter is a variable). This variable now contains the instance of the semantic map we have loaded, which is represented like this:

MapInst = http://knowrob.org/kb/u_map.owl#SemanticEnvironmentMap_iW6S

Whenever we use variables, there may be multiple solutions to one question. To find out whether this is the only solution or whether there are more, click the little arrow button right next to the question mark button. Further solutions are available as long as the button can be clicked and the result pane does not show a true or false statement.
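The sketch below, again plain SWI-Prolog with hypothetical facts (placed_on/2 and objects_on/2 are invented for illustration), shows both points: lowercase names such as bowl are constants, capitalized names such as X are variables, and a goal like placed_on(X, table) can succeed several times. In a plain SWI-Prolog terminal, typing ; asks for the next solution, much like the arrow button does in OpenEase; findall/3 instead collects all solutions into a list at once, which is what the Task 1 solution below relies on.

```prolog
% Hypothetical facts. Lowercase names (bowl, table) are constants (atoms);
% capitalized names (X, Surface, Objects) are variables.
placed_on(bowl, table).
placed_on(spoon, table).
placed_on(milk, counter).

% placed_on(X, table) has two solutions (X = bowl, X = spoon), which
% Prolog finds by backtracking; findall/3 gathers all of them in order.
objects_on(Surface, Objects) :-
    findall(X, placed_on(X, Surface), Objects).
```

For example, objects_on(table, L). answers L = [bowl, spoon], and a surface with no objects yields the empty list.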

Tasks and Exercises

Now, let's see if you can figure out the answers to the following questions by yourself. There can be more than one way to write the queries that obtain the result, so if you come up with a different solution than the one suggested here, that's totally fine. You can try to solve these without any hints at all. If you want some hints, under each task some useful queries are mentioned that might help with solving that particular task.

Task 1: Which types of objects are brought by the demonstrators?

Solution:

findall(_T, (entity(_A, [an, action, [type, 'GraspingSomething'], ['object_acted_on', O]]), owl_individual_of(O, _T)), ListOfObjects).

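The result of the query is a very long list of types: owl_individual_of enumerates every class an object belongs to, so generic classes such as owl:Thing appear alongside specific ones, and types can repeat. Here is a snippet: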

ListOfObjects = [owl:Thing, owl:NamedIndividual, knowrob:KoellnMuesliCranberry, knowrob:BreakfastCereal, knowrob:CerealFood, knowrob:Granular, ...]
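The snippet mixes specific classes such as KoellnMuesliCranberry with generic ones such as owl:Thing, because an individual belongs to its own class and to every superclass of it. The toy ontology below, in plain SWI-Prolog, reproduces this effect and shows one way to tidy the result: collect only direct types and pass the list through sort/2, which removes duplicates. All predicates and names here are hypothetical; how to obtain direct types in OpenEase itself depends on the predicates available there.

```prolog
% Hypothetical class hierarchy, standing in for the OWL ontology.
subclass_of('KoellnMuesliCranberry', 'BreakfastCereal').
subclass_of('BreakfastCereal', 'CerealFood').
subclass_of('CerealFood', 'Thing').
subclass_of('Bowl', 'Thing').

% Hypothetical individuals and their most specific (direct) class.
direct_type(muesli_1, 'KoellnMuesliCranberry').
direct_type(muesli_2, 'KoellnMuesliCranberry').
direct_type(bowl_1, 'Bowl').

% Transitive superclass relation.
superclass(C, S) :- subclass_of(C, S).
superclass(C, S) :- subclass_of(C, M), superclass(M, S).

% An individual belongs to its direct type and to every superclass of it.
individual_of(I, C) :- direct_type(I, C).
individual_of(I, C) :- direct_type(I, D), superclass(D, C).

% Hypothetical grasped objects.
grasped(muesli_1).
grasped(muesli_2).
grasped(bowl_1).

% All types, including generic superclasses and duplicates.
all_types(Ts) :-
    findall(T, (grasped(O), individual_of(O, T)), Ts).

% Distinct direct types only: sort/2 removes duplicates.
direct_grasped_types(Ts) :-
    findall(T, (grasped(O), direct_type(O, T)), Ts0),
    sort(Ts0, Ts).
```

Here all_types/1 returns ten entries (with 'Thing' three times), while direct_grasped_types/1 returns ['Bowl', 'KoellnMuesliCranberry'].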

Task 2: What are these objects' initial poses?

Solution

Task 3: What are their final poses?

Solution

Task 4: Highlight the human when he starts to grasp an object

Solution

Conclusion
