Introduction

There is a fundamental trade-off between the specificity and generality of robot plans. Specific plans are tailored for specific tasks, specific robots, specific objects, and specific environments. They can, therefore, make strong assumptions. For example, a robot that is supposed to set a breakfast table can assume that a bowl object should always be grasped from its top side, as grasping from the front or sideways would result in the object not fitting into the hand. This routine – always grasp from the top – is very simple, fast and robust but it only works for this particular context of task, object and environment. The disadvantage of this approach is that the specific routines are difficult to transfer to other contexts.

The other extreme is to write the plans such that they are very general. To do this, the plans have to include general statements such as “pick up an object”, where the object could be any object in any context. The advantage of this approach is that the plans are easily transferable but they require very general subroutines such as fetching any object in any context for any purpose. Often these general methods are not sufficiently performant for real-world applications.

In our paper “Self-specialization of General Robot Plans Based on Experience” we propose a more promising approach to competently deal with the trade-off between generality and specificity, namely, plan executives that are able to specialize general plans through running them for specific tasks and thereby learning appropriate specializations.

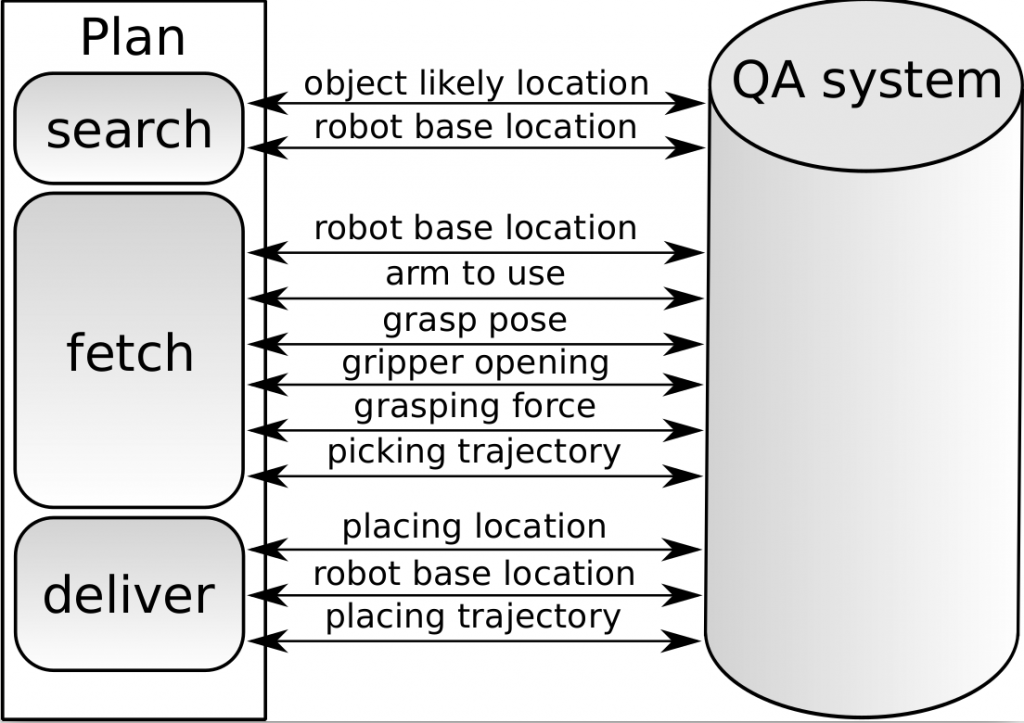

To this end, we frame the robot control problem for manipulation tasks in a particular way: as the problem of inferring action parameterizations that imply successful execution of underdetermined tasks. For example, in the context of setting a table, the action parameters that determine whether the task succeeds are the inferred locations of the required objects, the grasping orientation and grasping points for each object, appropriate object placing locations and the corresponding robot base locations. Thus, specializing plans in this setting means learning the distributions over these parameters that are implied by the specific problem domain.
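To make the idea of an underdetermined task more concrete, here is a minimal Python sketch. The class and field names are hypothetical and chosen only to mirror the parameters listed above; they are not taken from our plan language. Every field left as `None` stands for an inference task that still has to be solved before the action can be executed.

```python
from dataclasses import dataclass, fields
from typing import List, Optional, Tuple

Position = Tuple[float, float, float]


@dataclass
class PickAndPlaceParameters:
    """Hypothetical container for the parameters of one pick-and-place action."""
    object_location: Optional[Position] = None
    grasp_orientation: Optional[str] = None   # e.g. "top", "front", "side"
    grasp_point: Optional[Position] = None
    placing_location: Optional[Position] = None
    base_location: Optional[Position] = None


def open_parameters(params: PickAndPlaceParameters) -> List[str]:
    """Return the names of all parameters that still have to be inferred."""
    return [f.name for f in fields(params) if getattr(params, f.name) is None]
```

A specific plan would hard-code some of these fields (for instance, always `grasp_orientation="top"` for a bowl), whereas a general plan leaves all of them open.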

To automate this process, we design the general plans such that the parameter inference tasks therein are represented explicitly, transparently and in a machine-understandable way. We also equip the plans with an experience recording mechanism that records not only the low-level execution data but also the semantic steps of plan execution and their relations.

Approach and Architecture

There are many ways to solve the inference tasks for parameterizing mobile manipulation actions: brute-force search, using expert systems, logical reasoning, imitation learning and learning based on experience.

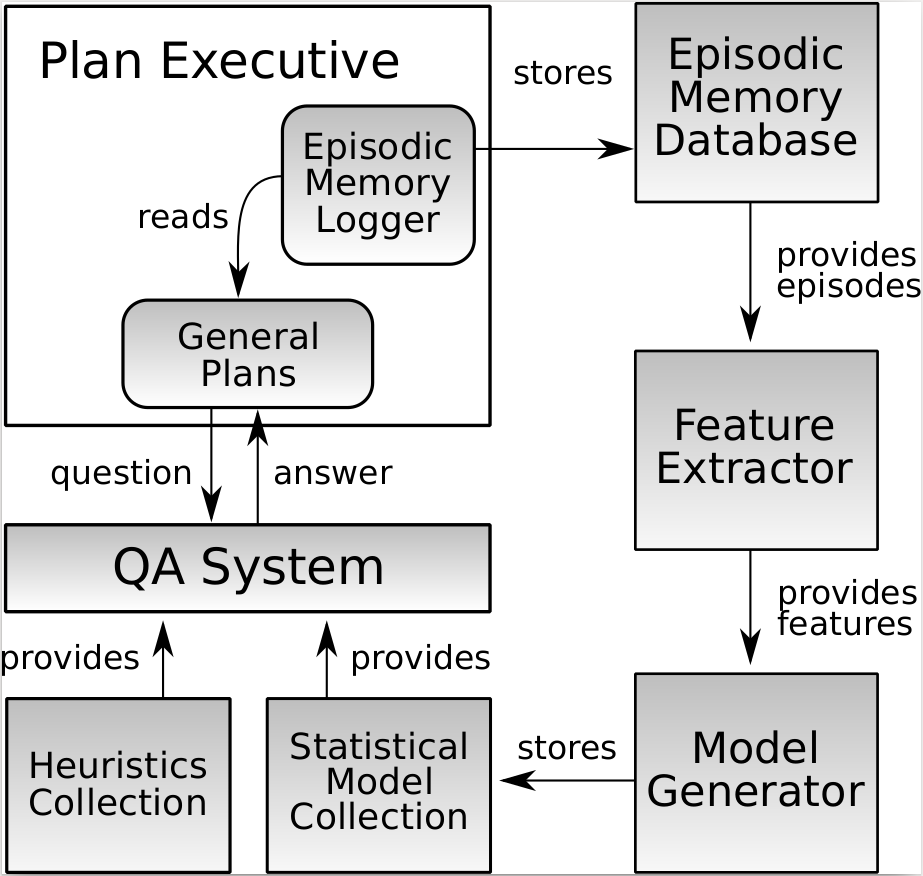

We apply the brute-force solver in our general plans. However, to make this approach sufficiently performant for real-world applications, we rely heavily on domain discretization and heuristics. For example, to infer how to position the robot’s base such that it can reach an object, we restrict the domain of all positions on the floor to only those that are at the robot’s arm’s length away from the object. This reduces the search space, but the specifics implied by the problem domain, e.g. the optimal distance to the object, are not considered. Therefore, we apply statistical approaches to specialize the plan by learning the specifics implied by the task context. Figure 1 shows the architecture of the system that implements the approach presented in our paper.
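The base-positioning heuristic can be illustrated with a small Python sketch. It is a simplified illustration under our own assumptions, not the code running on the robot: the function names, the default arm length of 0.8 m and the number of sampled angles are all made up for this example, and `is_feasible` stands in for whatever reachability or collision check is available.

```python
import math
from typing import Callable, List, Optional, Tuple

Pose2D = Tuple[float, float, float]  # x, y, yaw


def candidate_base_poses(obj_x: float, obj_y: float,
                         arm_length: float = 0.8,
                         n_angles: int = 16) -> List[Pose2D]:
    """Discretize the circle of radius arm_length around the object."""
    poses = []
    for i in range(n_angles):
        angle = 2.0 * math.pi * i / n_angles
        x = obj_x + arm_length * math.cos(angle)
        y = obj_y + arm_length * math.sin(angle)
        yaw = math.atan2(obj_y - y, obj_x - x)  # robot faces the object
        poses.append((x, y, yaw))
    return poses


def first_feasible(poses: List[Pose2D],
                   is_feasible: Callable[[Pose2D], bool]) -> Optional[Pose2D]:
    """Brute-force search: try candidates in order, return the first that passes."""
    for pose in poses:
        if is_feasible(pose):
            return pose
    return None
```

Note that the heuristic fixes the distance to the object in advance. Whether a slightly smaller or larger distance would work better for a given object and environment is exactly the kind of specific that has to be learned from experience.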


We formulate the inference tasks as questions that are answered by the Question Answering (QA) System. Previously, the queries were answered using the brute-force solver with the Heuristics Collection. In our paper, we implemented a Model Generator that generates learned models stored in the Statistical Model Collection, such that the QA system is able to select relevant models to answer the inference tasks. To be able to generate the models, the Plan Executive contains an Episodic Memory Logger, which is a mechanism for recording robot experiences that logs not only low-level data streams but also the semantically annotated steps of the plan and their relations. The experiences are stored in the Episodic Memory Database, from which the Feature Extractor module extracts features for making up supervised learning problems, which are then used by the Model Generator.

The overall process forms a loop. First, the robot executes mobile manipulation tasks using the heuristics-based inference engine. Once a significant amount of data is collected, the models are generated. Next, the robot executes its tasks again, now using model-based inference, and collects more data. The new data is used to update the existing models, which closes the loop. Thus, by generating the statistical models, we are transforming the general plan into a specialized plan which adapts to the objects and environment in which the agent is performing.
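The loop described above can be sketched in a few lines of Python. This is a toy illustration under strong assumptions, not our implementation: `EpisodicMemory`, `generate_models`, `QASystem` and `specialization_loop` are hypothetical stand-ins for the corresponding components, a context is reduced to a single hashable label, and a plain table of success rates takes the place of the statistical models.

```python
import random
from collections import defaultdict


class EpisodicMemory:
    """Stores (context, chosen parameter, success) records."""

    def __init__(self):
        self.episodes = []

    def log(self, context, parameter, success):
        self.episodes.append((context, parameter, success))


def generate_models(memory, min_samples=10):
    """Estimate a success rate per (context, parameter) from the logged episodes."""
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [successes, trials]
    for context, parameter, success in memory.episodes:
        counts[context][parameter][0] += int(success)
        counts[context][parameter][1] += 1
    models = {}
    for context, per_param in counts.items():
        # Only build a model once enough data exists for this context.
        if sum(trials for _, trials in per_param.values()) >= min_samples:
            models[context] = {p: s / t for p, (s, t) in per_param.items()}
    return models


class QASystem:
    """Answers with a learned model if one exists, otherwise with the heuristic."""

    def __init__(self, heuristic_options, rng=None):
        self.heuristic_options = list(heuristic_options)
        self.models = {}
        self.rng = rng or random.Random(0)

    def answer(self, context):
        model = self.models.get(context)
        if model:
            return max(model, key=model.get)  # parameter with best success rate
        return self.rng.choice(self.heuristic_options)


def specialization_loop(qa, memory, execute, context, rounds=2, trials=30):
    """Execute, log, regenerate the models, and repeat."""
    for _ in range(rounds):
        for _ in range(trials):
            parameter = qa.answer(context)
            memory.log(context, parameter, execute(context, parameter))
        qa.models = generate_models(memory)
```

In the first round no model exists yet, so every question falls back to the heuristic options. From the second round on, the answers come from the learned success rates, and each further round refines them with new data.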


Results and Evaluation

For our evaluation scenario, we decided to improve the pick-and-place performance of our PR2. The combination of Markov logic networks and naive Bayes models allows us to reach an improvement of 50% when the robot performs pick-and-place actions. In addition, these models are interpretable, which allows us to see in which situations our robot’s action execution will fail. For instance, we found out that the robot is not able to grasp a bowl when the bowl is flipped upside down. The following video shows our data acquisition approach and the resulting improvement on the real robot.
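To give an intuition for why such models are interpretable, here is a minimal categorical naive Bayes classifier with Laplace smoothing in plain Python. It is a generic textbook sketch, not the model from our paper: the class name, the feature names and the smoothing parameter `alpha` are assumptions made for this example, and it covers only the naive Bayes part, not the Markov logic networks.

```python
from collections import Counter, defaultdict


class CategoricalNaiveBayes:
    """Naive Bayes over categorical features with Laplace smoothing."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha

    def fit(self, rows, labels):
        self.label_counts = Counter(labels)
        self.feature_counts = defaultdict(Counter)  # (label, feature) -> value counts
        self.values = defaultdict(set)              # feature -> observed values
        for row, label in zip(rows, labels):
            for feature, value in row.items():
                self.feature_counts[(label, feature)][value] += 1
                self.values[feature].add(value)
        return self

    def likelihood(self, feature, value, label):
        """Smoothed estimate of P(feature = value | label)."""
        counts = self.feature_counts[(label, feature)]
        k = len(self.values[feature])
        return (counts[value] + self.alpha) / (self.label_counts[label] + self.alpha * k)

    def predict_proba(self, row):
        """Posterior over labels: prior times per-feature likelihoods, normalized."""
        total = sum(self.label_counts.values())
        scores = {}
        for label, n in self.label_counts.items():
            score = n / total
            for feature, value in row.items():
                score *= self.likelihood(feature, value, label)
            scores[label] = score
        norm = sum(scores.values())
        return {label: s / norm for label, s in scores.items()}
```

With rows such as `{"object": "bowl", "orientation": "upside_down"}` labeled by grasp success, calling `likelihood("orientation", "upside_down", False)` shows directly how strongly that feature value is associated with failure. Reading off such conditional probabilities is what makes failure situations visible.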

Conclusion

This blog entry gave an overview of how we transform a general plan into a specialized one using the robot’s experience. Our approach is an architecture that generates and updates statistical models to enable plan specialization. For more information, please feel free to read our paper.